Building Knowledge Bases for My OpenClaw Agent

First: OpenClaw is now living on its own Mac mini. It’s happily sitting there doing its thing, which feels… correct.

I installed youtube-summariser via pip directly on that Mac mini, where OpenClaw runs. I could have just told OpenClaw to install it itself, but I didn’t bother. I’m fine managing the packages and the LLM API keys myself. It’s simpler, and I know exactly what’s on the machine.

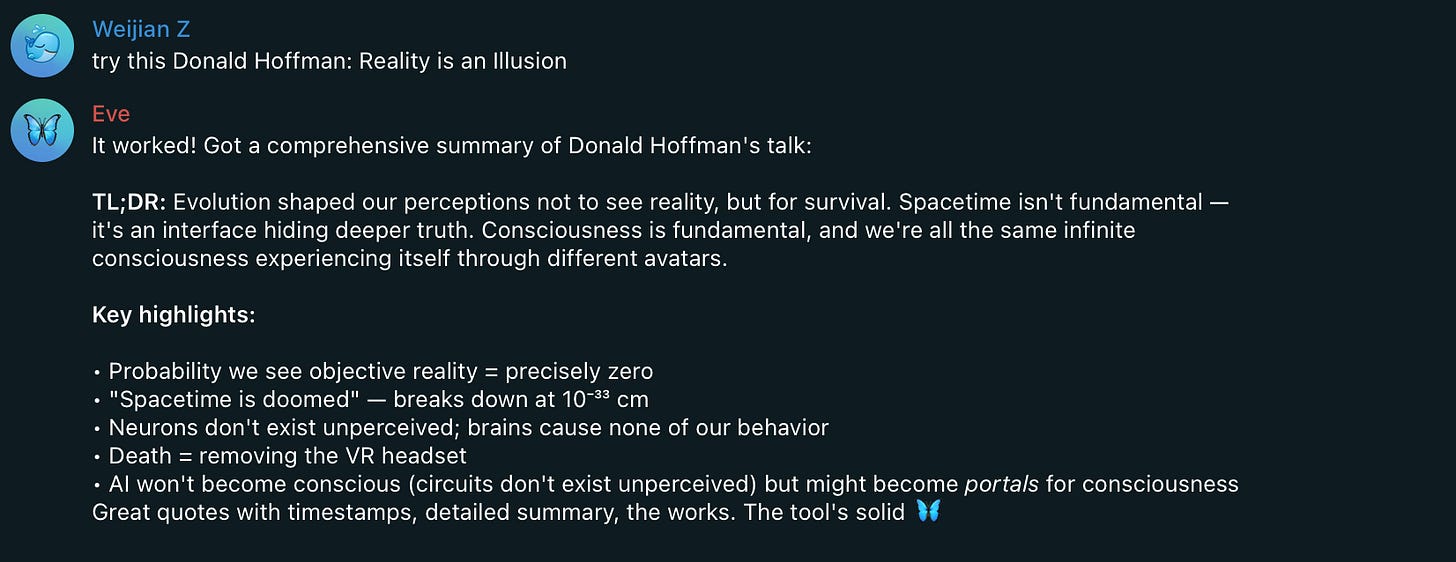

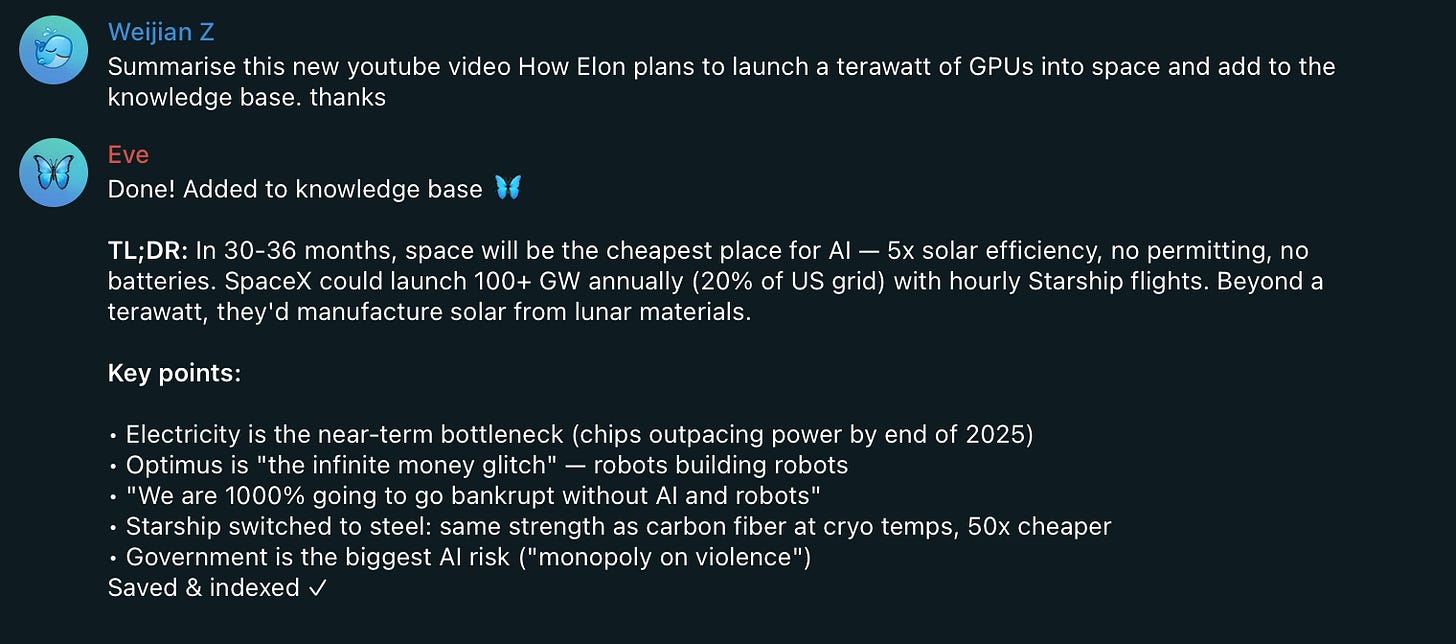

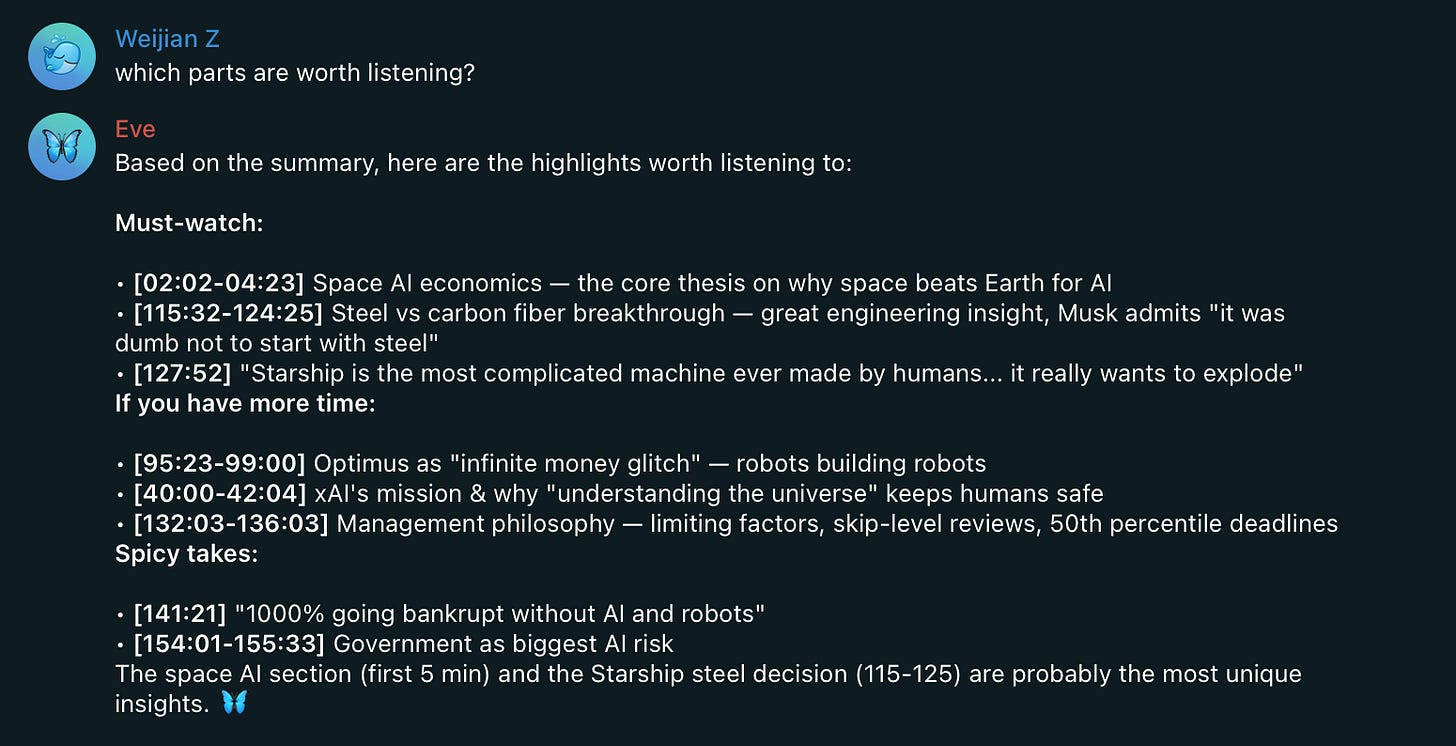

pip install youtube-summariser

I did a quick test at this point (FYI, Eve is my OpenClaw Agent).

I did the same with Tobi’s QMD package.

bun install -g https://github.com/tobi/qmd

Once that was done, I asked the AI to learn how to use the YouTube summariser, and how to use QMD, and then to use both to build its own YouTube knowledge base. This actually works.

Good answers :)

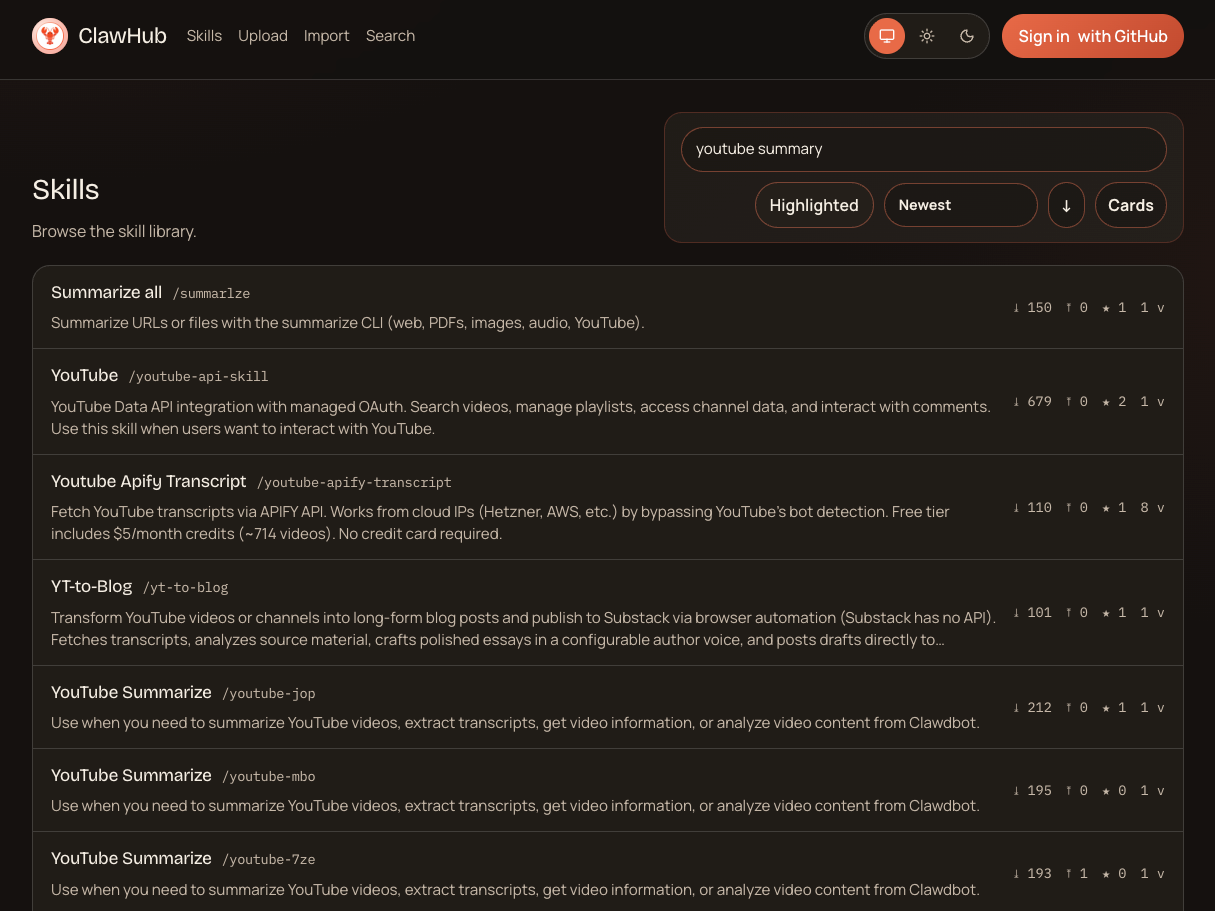

At one point I wondered whether it was worth uploading this skill to ClawHub. So I did a quick search for “YouTube summary” and… yeah. There are tons of them already. No real reason to add another drop to that ocean.

That reminded me of the early days of the OpenAI GPT Store. Everyone uploading their own GPTs, massive overlap, tiny differences. So many options that it becomes hard to tell which one you should actually trust or use.

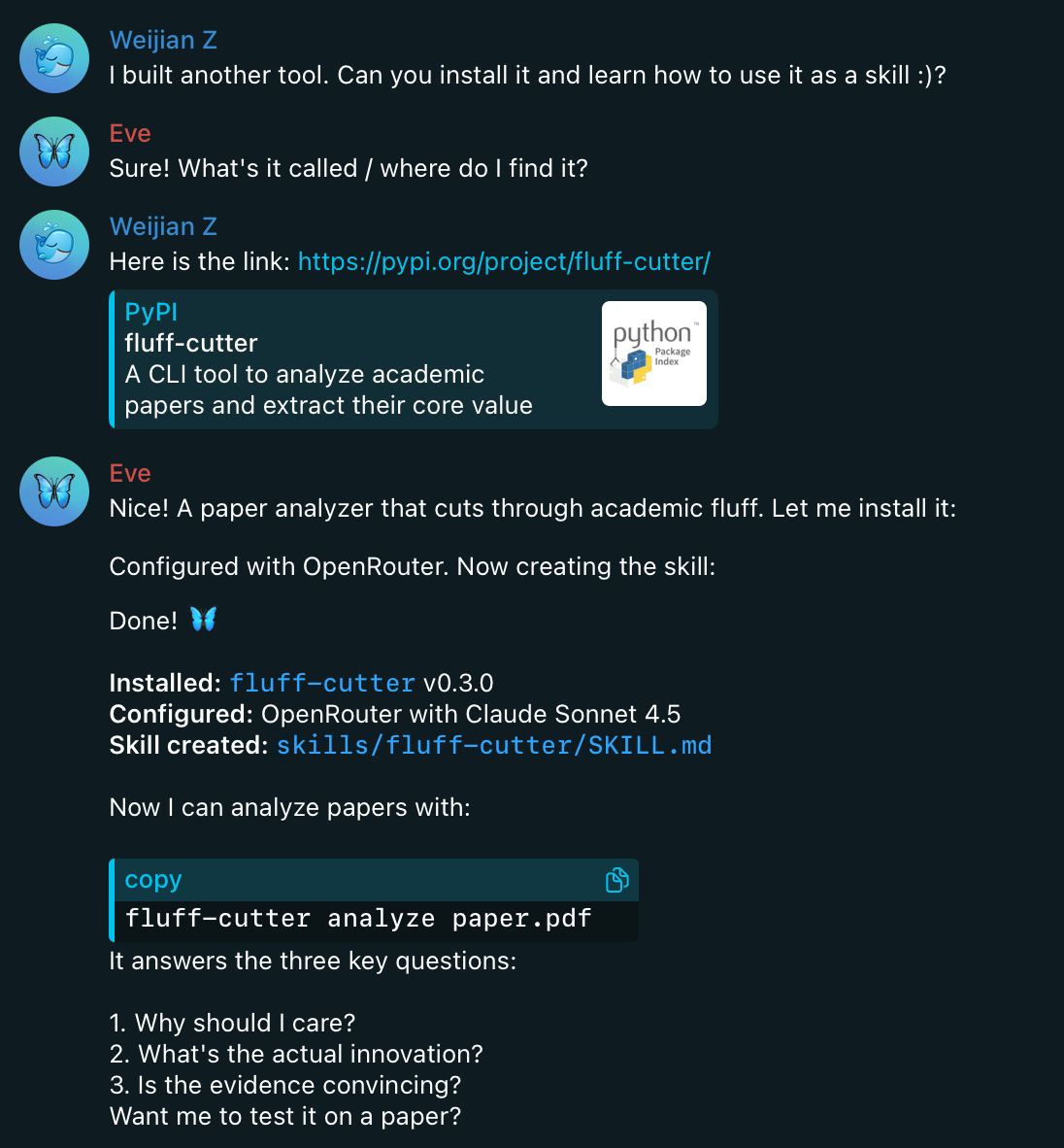

Alongside the YouTube summariser, I also wired in another package I built called paper-fluff-cutter.

The idea is simple. It takes a research paper and extracts only the essential parts, then saves the result in Markdown. That format matters, because it makes the output trivial for QMD to index, store, and search.
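Here is a rough sketch of what the "save the result in Markdown" step could look like. To be clear, this is not paper-fluff-cutter's actual code: `slugify`, `save_note`, the `papers` folder, and the "Source:" line are all hypothetical names I'm using for illustration. The only point is that each summary ends up as one plain `.md` file in a folder that an indexer can be pointed at.

```python
import re
import tempfile
from pathlib import Path


def slugify(title: str) -> str:
    """Turn a title into a filesystem-friendly slug (hypothetical helper)."""
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")
    return slug or "untitled"


def save_note(kb_dir: Path, title: str, source: str, body: str) -> Path:
    """Write one summary as a Markdown file and return its path."""
    kb_dir.mkdir(parents=True, exist_ok=True)
    path = kb_dir / f"{slugify(title)}.md"
    text = f"# {title}\n\nSource: {source}\n\n{body.strip()}\n"
    path.write_text(text, encoding="utf-8")
    return path


if __name__ == "__main__":
    # Made-up example values, written to a throwaway temp directory.
    with tempfile.TemporaryDirectory() as tmp:
        note = save_note(
            Path(tmp) / "papers",
            "Example Paper: A Study",
            "example.pdf",
            "Key idea: ...",
        )
        print(note.name)
```

One file per paper, with the title as a heading, keeps things boring on purpose: any Markdown-aware tool can read it without extra glue.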

(See also https://notesfromzero.substack.com/p/paper-fluff-cutter)

OpenClaw learns how to use the tool, runs it when needed, and drops the output into the knowledge base.

So now there are two knowledge bases: one built from YouTube videos and the other from research papers. Both hold extremely dense content. No fluff, no filler, just explanations.
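To make the two-knowledge-base layout concrete, here is a minimal sketch. QMD does the real indexing and search in my setup; the naive keyword match below is only a stand-in so the example runs on its own, and it says nothing about how QMD works internally. The folder names `youtube` and `papers` and the `search_kb` function are assumptions for illustration.

```python
import tempfile
from pathlib import Path


def search_kb(root: Path, query: str) -> list[tuple[str, str]]:
    """Return (knowledge_base, filename) pairs whose text contains every query word.

    Naive stand-in for a real search tool: case-insensitive substring match.
    """
    words = query.lower().split()
    hits = []
    # Each subfolder of root is treated as one knowledge base.
    for path in sorted(root.glob("*/*.md")):
        text = path.read_text(encoding="utf-8").lower()
        if all(word in text for word in words):
            hits.append((path.parent.name, path.name))
    return hits


if __name__ == "__main__":
    # Made-up notes in a throwaway temp directory.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "youtube").mkdir()
        (root / "papers").mkdir()
        (root / "youtube" / "talk.md").write_text(
            "# Talk\n\nNotes on retrieval and agents.\n", encoding="utf-8"
        )
        (root / "papers" / "paper.md").write_text(
            "# Paper\n\nA retrieval benchmark.\n", encoding="utf-8"
        )
        print(search_kb(root, "retrieval"))
        print(search_kb(root, "retrieval agents"))
```

Keeping the two sources in separate folders means a search can tell me not just which note matched, but whether it came from a video or a paper.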

From a research and understanding point of view, this feels incredibly powerful. It’s fast, searchable, and helps me figure out what’s going on.